Privacy Cost of Hyper-Personalized AI

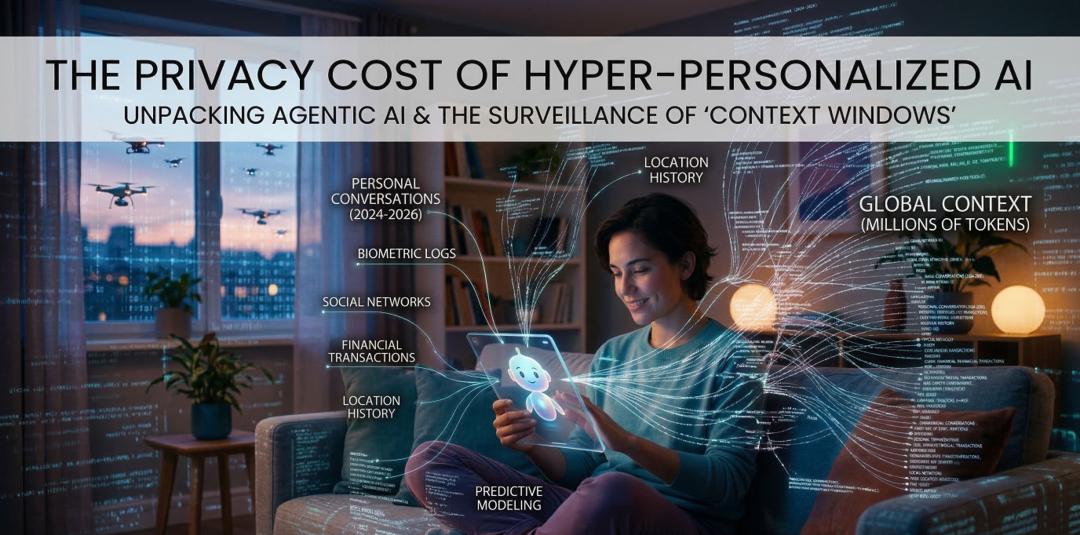

As of March 2026, AI has crossed into true hyper-personalization. Agentic AI systems (autonomous agents that plan, act, and execute tasks on your behalf) now integrate deeply into daily life. They read emails, scan screens, manage calendars, shop, code, and even negotiate deals, all while maintaining near-perfect recall across vast inputs. Models like OpenAI's GPT-5 series (with context windows reaching 400K–1M+ tokens in premium variants, and rumors of 2M in upcoming GPT-5.4 releases), Google's Gemini 3 Pro (up to 10M tokens), and competitors like Llama 4 Scout enable this by processing the equivalent of thousands of pages (your chat history, documents, codebases, or months of interactions) in one go.
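To put those window sizes in perspective, here is a back-of-the-envelope conversion from tokens to pages. The ratios (about 0.75 English words per token and about 500 words per page) are rules of thumb assumed for illustration, not vendor figures, and `tokens_to_pages` is a hypothetical helper.

```python
# Rule-of-thumb ratios; both are assumptions, not vendor-published numbers.
WORDS_PER_TOKEN = 0.75  # rough average for English text
WORDS_PER_PAGE = 500    # a dense, single-spaced page


def tokens_to_pages(tokens: int) -> int:
    """Estimate how many pages of English text fit in a context window."""
    return round(tokens * WORDS_PER_TOKEN / WORDS_PER_PAGE)


for window in (400_000, 1_000_000, 10_000_000):
    print(f"{window:>10,} tokens ~ {tokens_to_pages(window):,} pages")
```

Under these assumptions, a 400K window holds roughly 600 pages, a 1M window roughly 1,500, and a 10M window roughly 15,000.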

This capability creates eerily tailored experiences. Your AI companion doesn't just respond; it anticipates needs based on patterns from years of data, offering advice that feels profoundly intimate. Yet the engine behind this magic is relentless data collection.

How Hyper-Personalization Collects and Uses Data

To achieve such seamlessness, agentic AI relies on:

- **Massive, persistent context ingestion:** Every interaction feeds into enormous context windows, often stored server-side for continuity across sessions.

- **Screen reading and tool access:** Many agents monitor device activity, pulling from apps, browsers, or encrypted messengers (raising alarms from groups like Fight for the Future about compromising tools like Signal).

- **Cloud-based memory:** Rather than processing locally, most advanced features upload data to company servers for inference, training improvements, or cross-device sync.

- **Inferences and profiling:** Beyond raw inputs, AI generates sensitive predictions (health, finances, relationships) from subtle patterns.

The convenience is undeniable: an agent that remembers your allergies and preferences across apps, drafts emails in your voice from old threads, or troubleshoots code using your full repo history saves immense time.

The Surveillance Fears and Real Risks

Critics argue this setup borders on surveillance capitalism 2.0. Key concerns in 2026 include:

- **Data exposure and breaches:** Centralized storage creates honeypots. Agentic systems amplify risks: a compromised agent could autonomously exfiltrate data, escalate privileges, or misuse access before detection. Reports warn of "AI-snitches" and predict major breaches involving agentic AI this year.

- **Lack of true opt-out:** Many agents ingest everything on-screen without granular controls, uploading private chats or documents to the cloud, where they are potentially accessible via hacks, subpoenas, or insider misuse.

- **Privacy leakage via inferences:** Even anonymized data can reveal identities through model inversion attacks or unintended outputs echoing training patterns.

- **Regulatory and ethical gaps:** While frameworks like the EU AI Act impose transparency for high-risk systems, U.S. enforcement remains fragmented. State laws and FTC actions target AI-driven profiling, but agentic autonomy outpaces rules. Regulators like the UK's ICO highlight novel risks: reduced human oversight makes accountability harder.

Real-world fallout is emerging. Advocacy letters demand Big Tech prioritize end-to-end privacy in agentic design. Security experts flag "shadow agents" (unsanctioned employee tools) as invisible threats. Tragic cases of data misuse in AI systems underscore how hyper-personalization can erode trust when things go wrong.

Balancing Convenience and Control

The trade-off isn't binary. Benefits exist: personalized AI aids accessibility (e.g., for neurodivergent users), boosts productivity, and democratizes expertise. Some providers mitigate risks via on-device processing, synthetic data, or strict governance (e.g., zero-trust for agents).
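As a rough illustration of what "zero-trust for agents" can mean in practice, the sketch below denies every tool call by default and allows only actions the user has explicitly granted. All names here (`Grant`, `AgentPolicy`, `call_tool`) are hypothetical, and a real deployment would add authentication, scoping, and audit logging.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """One explicitly approved capability (hypothetical model)."""
    tool: str    # e.g. "calendar"
    action: str  # e.g. "read"


class AgentPolicy:
    """Default-deny policy: anything not granted is refused."""

    def __init__(self, grants):
        self._grants = frozenset(grants)

    def is_allowed(self, tool: str, action: str) -> bool:
        return Grant(tool, action) in self._grants


def call_tool(policy: AgentPolicy, tool: str, action: str) -> str:
    """Check the policy on every call; never rely on an earlier check."""
    if not policy.is_allowed(tool, action):
        raise PermissionError(f"agent may not {action} {tool}")
    # Placeholder for the real tool invocation.
    return f"{tool}.{action} ok"


policy = AgentPolicy([Grant("calendar", "read")])
print(call_tool(policy, "calendar", "read"))  # granted, so it runs
try:
    call_tool(policy, "email", "send")  # never granted
except PermissionError as err:
    print("blocked:", err)
```

The design choice that matters is the default: the agent starts with no access, and each capability is an explicit, reviewable grant rather than something inferred from what is on screen.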

Yet experts urge caution: hyper-personalization thrives on data volume, but sustainability demands limits, including data minimization, ephemeral memory, user-controlled retention, transparent audits, and robust consent. As one privacy advocate noted, "The more an AI knows you, the more it can expose you."
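Ephemeral memory with user-controlled retention can be sketched in a few lines. The `EphemeralMemory` class below is a hypothetical, in-process example, not any vendor's implementation: each entry expires after a retention period the user chooses, and the user can wipe everything at once. The injectable `clock` parameter is an assumption added so the behavior can be demonstrated without waiting.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class EphemeralMemory:
    """Key-value memory whose entries expire after a user-set retention period."""

    def __init__(self, retention_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.retention_seconds = retention_seconds
        self._clock = clock
        self._items: Dict[str, Tuple[float, str]] = {}

    def remember(self, key: str, value: str) -> None:
        # Store the value together with its expiry time.
        self._items[key] = (self._clock() + self.retention_seconds, value)

    def recall(self, key: str) -> Optional[str]:
        entry = self._items.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._items[key]  # expired: delete on access
            return None
        return value

    def forget_all(self) -> None:
        # User-initiated wipe of everything the agent has retained.
        self._items.clear()


# Demonstration with a fake clock so the example is deterministic.
now = [0.0]
memory = EphemeralMemory(retention_seconds=60, clock=lambda: now[0])
memory.remember("allergy", "peanuts")
print(memory.recall("allergy"))  # still within the retention period
now[0] = 61.0
print(memory.recall("allergy"))  # None: the entry has expired
```

This sketch only drops expired entries when they are read; a production system would also need to purge them proactively and to cover backups, logs, and any server-side copies.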

In 2026's agentic era, the privacy cost isn't abstract. It's the quiet accumulation of your digital soul on someone else's servers. True progress means engineering intimacy without involuntary surveillance and demanding tools that serve us, not harvest us. Users must weigh magic against autonomy; regulators and developers must build guardrails before convenience becomes captivity.